The 80% Trap: Build vs Buy Got Harder, Not Easier

Coding agents made the first 80% cheap to build. The last 20% is as hard as ever. A four-axis framework for build-vs-buy decisions in the era of AI.

I'm the CTO of Minoa, an AI platform for B2B sales teams. Which means I'm running the build-vs-buy decision more or less weekly — sometimes well, sometimes badly — and I've been watching how the answer is shifting under us.

There's a take making the rounds: agentic coding tools have made the cost to build collapse, so build more, buy less. It's the kind of thing that feels right because the demo is so impressive. You watch Claude Code or Cursor produce a working prototype in 20 minutes that would have taken a sprint, and you naturally extrapolate. If everything is this cheap to build, why pay for software?

The take isn't wrong. It's just the easy half of the truth. The harder half is what actually matters when you're making the decision — and the way I see operators getting it wrong now is different from the way they got it wrong two years ago.

What actually changed on the build side

Three things shifted, not one.

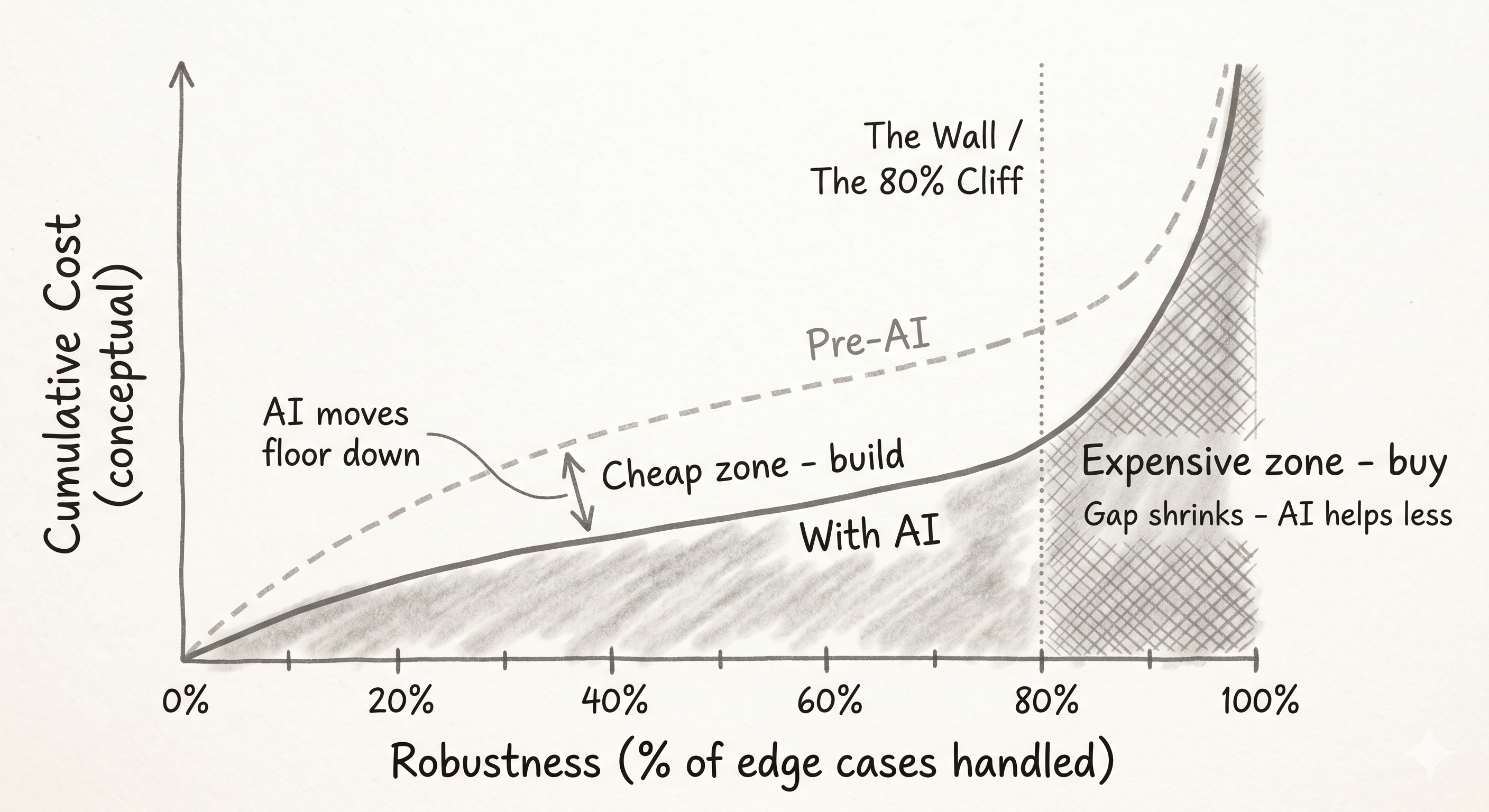

1. The cost to get to 80% collapsed. The cost to get to 100% didn't.

This is the most important point and the one that gets glossed over. Coding agents are excellent at producing the first cut of something — a dashboard, a CRUD app, a CSV importer, an internal tool, a one-off script. The first 80% of functionality is close to free now.

The last 20% — the edge cases, the auth model, the data validation, the failure modes, the "what happens when a user does X with non-ASCII characters in a Safari tab on a flaky connection" — is roughly as hard as it always was. Sometimes harder, because the agent confidently produced code that looks right but isn't, and you spend the same amount of time as before tracing why.

So the right question isn't "can we build this?" It's "what level of robustness do we actually need, and where on the 80 → 100% curve does that put us?"

A weekend script that one person uses? 80% is fine. Build it. The thing that processes payments, holds customer data, or sits in a critical path? You need 100%, and the gap from 80 to 100 will eat you.

The 80/20 boundary is also a moving target. What an agent gets to 80% today, it may get to 95% in 18 months. So this isn't a static rule. It's a question you'll keep re-asking as agents cover more of the curve and the bar for "good enough" keeps rising.

Jason M. Lemkin made a related point recently that I think nails the intuition. He's vibe-coded ten production apps with 750k+ uses. He still doesn't recommend you build the things you depend on:

"What happens when one participant's connection drops mid-session? When Chrome updates break the WebRTC implementation? When someone is on a VPN that blocks peer-to-peer connections? Riverside has an engineering team handling hundreds of edge cases I would never think of until they break live on air."

That's the 80/20 wall in plain language. He'd vibe-coded the easy 80% of a podcast recording tool over a weekend. The other 20% — the edge cases that show up live, in front of customers — is what an entire software engineering team handles for him.

2. The cost to maintain went up, even as the cost to build went down.

When you let an agent ship a system fast, you borrow against future maintainability. The code might pass tests today. It might run fine for six months. But when something breaks, or a dependency updates, or you need to extend it, you're back in the codebase — now without the original context, often without good tests, sometimes with patterns no engineer on the team would have chosen.

The honest TCO calculation isn't "how long did it take to build." It's "how long did it take to build, plus the expected cost of every change over its useful life, plus the variance on that cost."

Vibe-coded systems have wide variance. Sometimes maintenance is fine. Sometimes you're rebuilding it in a year. You don't know which until you're in it. The risk-adjusted maintenance cost went up even if the median didn't.
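
To make that calculation concrete, here is a minimal sketch. Everything in it is an assumption for illustration: the numbers are invented (think engineer-days), the scenarios are treated as equally likely, and "mean plus a multiple of the standard deviation" is just one simple way to price in variance, not the way to do it.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Option:
    name: str
    upfront_cost: float                 # build or integration cost (hypothetical units)
    maintenance_scenarios: list[float]  # equally likely lifetime maintenance costs


def risk_adjusted_tco(option: Option, risk_aversion: float = 1.0) -> float:
    """Upfront cost + expected maintenance + a penalty proportional to the spread."""
    expected = mean(option.maintenance_scenarios)
    spread = pstdev(option.maintenance_scenarios)
    return option.upfront_cost + expected + risk_aversion * spread


# Invented numbers: the vibe-coded tool is usually cheap to maintain,
# but one scenario is "we rebuild it in a year".
vibe_coded = Option("vibe-coded tool", upfront_cost=2, maintenance_scenarios=[5, 10, 60])
vendor = Option("vendor", upfront_cost=10, maintenance_scenarios=[18, 20, 22])

for option in (vibe_coded, vendor):
    print(option.name, round(risk_adjusted_tco(option), 1))
```

With these made-up inputs, the vibe-coded tool wins if you ignore variance (`risk_aversion=0`) and loses once the spread is priced in, even though its median maintenance cost is lower. The point is the shape of the comparison, not the specific figures.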

3. "Half-built" is now the worst quadrant.

The failure mode I see most often: a team builds 80% of something, hits the wall, decides to keep limping along while also evaluating commercial alternatives. They end up paying twice — operating an unfinished internal tool while also paying for the software they should have bought from the start. The build was supposed to save money. It cost more, and now there's a sunk-cost argument keeping the half-built thing alive.

What changed on the buy side (the part that gets missed)

If your mental model of build vs buy is calibrated to 2022, you're also wrong about the buy side. Three things moved there too.

1. Software vendors got AI-accelerated.

The tool you would have bought 18 months ago is meaningfully more capable today. Vendors get to amortize AI investment across thousands of customers. You don't. The "buy" target isn't standing still while "build" moves toward it — both are moving, and often the buy target moves faster.

2. Integration cost collapsed.

The classic argument against buying software used to be: buy means six months of integration hell. MCP, agent glue, and well-designed APIs have made that meaningfully less true. The friction of plugging a vendor into your stack is lower than it's ever been. This systematically favors buy for anything plug-and-play, and the gap is widening.

3. Reversibility went up.

Build commitment used to be high — hire a team, lose two quarters, ship something. Now you can prototype an alternative in a day. That doesn't mean "build everything." It means the cost of trying something dropped close to zero, and the rate at which you should be running build-experiments is up.

The decision framework that actually works now

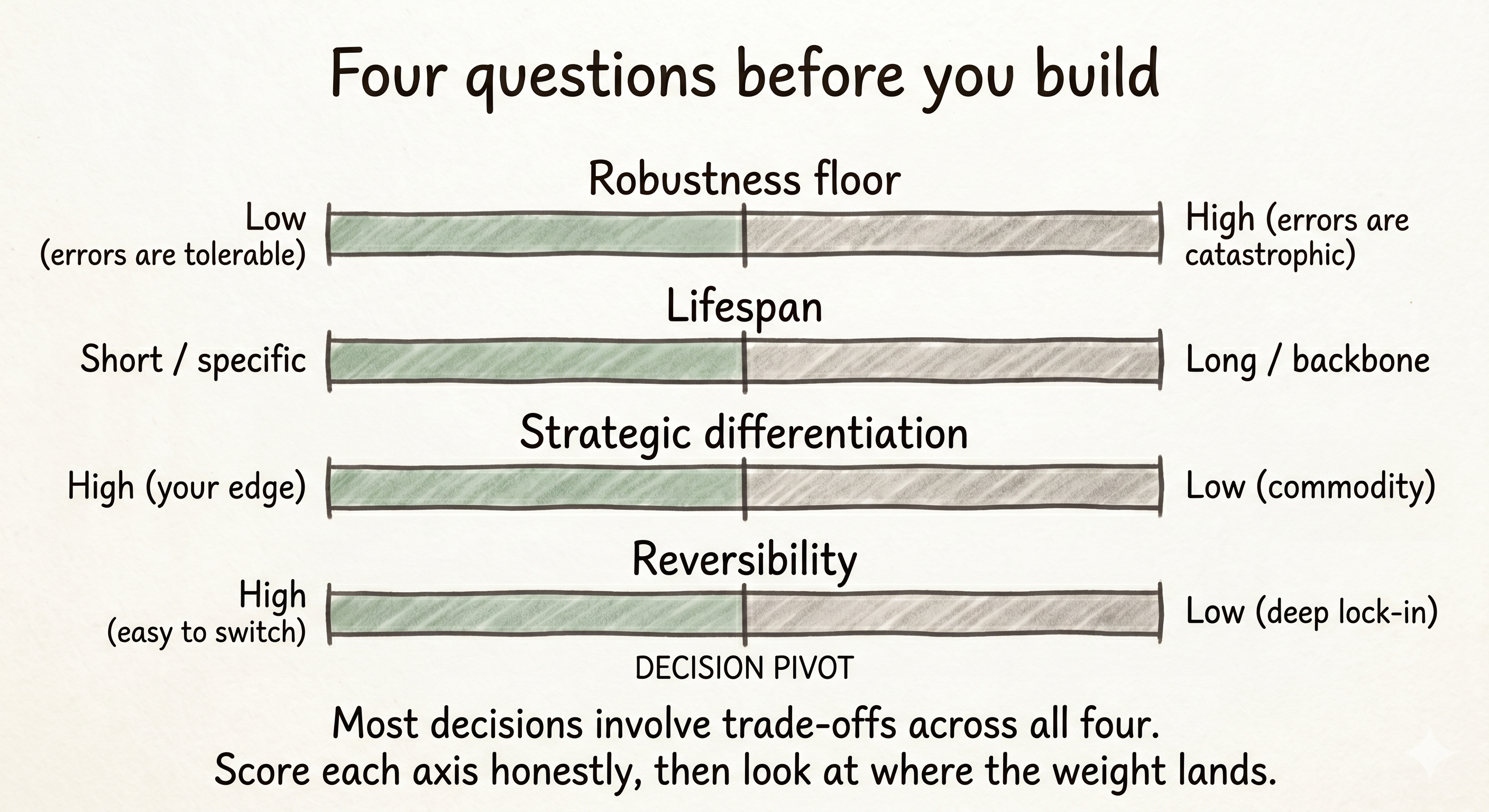

If "is it cheap to build?" is no longer a useful question, here are four axes that are.

1. Robustness floor.

How wrong can this be before it costs you? High floor (auth, payments, customer data, anything in a critical path) → buy, almost always. Low floor (internal scripts, one-off analyses, throwaway tools) → build, and quickly.

We buy auth at Minoa. Getting auth wrong is catastrophic: one bad day exposes everyone's data, and no agent is going to think through every OAuth edge case the way an Auth0 or Firebase team has. The robustness floor is too high. The same logic applies to anything in a regulated stack, any system of record, anything where "almost right" still ends with a customer call.

2. Lifespan.

How long does this need to live? Short-lived or highly specific → build. Long-lived backbone → buy.

The internal tools we've built and kept are the ones with a clear, narrow purpose and a clear expiration date. The ones that drifted into being "permanent infrastructure" without anyone deciding that's what they were are the ones that bit us later.

3. Strategic differentiation.

Is this the thing you're trying to be uniquely good at?

The old rule of thumb was "buy backbone." That rule was calibrated to a world where building backbone was prohibitive. It isn't anymore. Stripe built their own payments infrastructure when conventional wisdom said buy. The next generation of differentiated companies will do the same for things their predecessors had to buy. The right rule isn't "buy backbone" — it's "buy commodity, build differentiation, even when differentiation looks like backbone."

The corollary, which Lemkin makes well: build the 10% that gives you a genuine edge. Use other people's products for the other 90%. The trap is that vibe coding makes the 90% tempting too.

4. Reversibility.

How hard is it to switch later? High reversibility → build to learn, knowing you might switch. Low reversibility (deep data lock-in, organizational dependencies, anything that becomes culturally load-bearing) → be more cautious. Slow down on lock-in decisions.

The honest answer to most build-vs-buy questions is: it depends where you land on these four. The teams that consistently make the right call are the ones who actually score the decision against all four, instead of letting "build is cheap now" do the whole argument.
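
If it helps to see what "scoring against all four" could look like, here is a rough sketch. The 1-to-5 scale, the midpoint of 3, and the example ratings are all my assumptions for illustration; the sketch deliberately does not collapse the axes into a single number, because the interesting cases are the ones where they disagree.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    name: str
    robustness_floor: int  # 1 = throwaway script, 5 = auth, payments, customer data
    lifespan: int          # 1 = short-lived, 5 = long-lived backbone
    differentiation: int   # 1 = commodity, 5 = core to what makes you unique
    reversibility: int     # 1 = deep lock-in, 5 = trivial to switch later


def score(decision: Decision, midpoint: int = 3) -> dict[str, list[str]]:
    """Sort the four axes into those pulling toward build, buy, or neither."""
    # A high robustness floor and a long lifespan favor buy;
    # high differentiation and high reversibility favor build.
    pulls = {
        "robustness_floor": midpoint - decision.robustness_floor,
        "lifespan": midpoint - decision.lifespan,
        "differentiation": decision.differentiation - midpoint,
        "reversibility": decision.reversibility - midpoint,
    }
    result: dict[str, list[str]] = {"build": [], "buy": [], "neutral": []}
    for axis, pull in pulls.items():
        side = "build" if pull > 0 else "buy" if pull < 0 else "neutral"
        result[side].append(axis)
    return result


# Hypothetical ratings, not measured values.
auth = Decision("auth", robustness_floor=5, lifespan=5, differentiation=1, reversibility=2)
internal_tool = Decision("internal reporting tool", robustness_floor=2, lifespan=3,
                         differentiation=2, reversibility=5)

print(score(auth))
print(score(internal_tool))
```

With these ratings, auth lands on buy on every axis, while the internal tool splits: two axes say build, one says buy, one is neutral. A split like that is the signal to slow down and argue it out rather than let a single axis decide.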

A worked example: where it gets uncomfortable

The framework is clean in theory. In practice, the calls that matter are the ones where the axes pull in different directions.

A real one we ran recently: conversational data analytics. We piloted a best-in-class commercial tool for eight weeks. It was good. The integration was clean, the UI was thoughtful, the team behind it was strong. It was also five figures a year.

Then one of us spent a day building something on top of our existing data warehouse using an agent. The output was 80% as good. Not 95% — 80%. There were rough edges. It wasn't pretty.

But here's what I had to be honest about: we don't need 95%. We need to get the right answers to a small number of recurring questions, fast, with the team that already exists. The robustness floor for our use case is lower than the floor the vendor is solving for, because they're building for thousands of customers including ones who need 95%. We aren't one of them yet.

So we built. And I'll tell you what I told the team: this is a judgment call, and we should revisit it. If the use case expands, if more people start depending on it, if the rough edges start costing real time — we buy. The decision isn't permanent. It's the right call for now, and "for now" is a phrase I'm comfortable saying out loud rather than pretending the decision is settled.

That's the part that's hard to write into a framework. Sometimes the answer is "build, and put a calendar reminder to re-evaluate in six months." That's not weakness. It's how reversible decisions should work.

The same logic ran for a few other internal tools — an internal product marketing assistant, a voice-of-customer artifact one of the team built in Claude over an afternoon. None of these are mission-critical. None of them touch customer data. None of them need to be 100%. All of them save real hours every week. Build was the right call.

Try cheap, commit expensive

The most useful shift isn't "build more" or "buy more." It's that the cost of trying dropped to near zero, while the cost of committing didn't.

That's a different game from the one we played two years ago. Two years ago, "let's try building this" meant a real engineering investment — you had to be pretty sure before you started. Now you can have a working prototype before lunch. The right response is not to build more production systems. It's to run more experiments and let most of them die.

What that looks like in practice:

- Set a kill criterion before you start. "If this isn't getting weekly use by three people in 30 days, we delete it." Without one, every prototype drifts toward becoming permanent.

- Time-box the build. The agent will happily let you spend two weeks on what should have been two days. Cap it.

- Keep a clear separation between "experiment" and "production." Experiments don't get on-call rotation, don't get backups, don't get integrations. The moment they do, you're committing.

- Be honest about graduation. If something does graduate from experiment to production, that's the moment to re-run the four-axis framework. Most of the time, the answer is "now we should have bought."
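
A kill criterion is easier to honor when it's written down as something a machine can check. Here is a minimal sketch of the "three people weekly within 30 days" rule. The usage-log shape (a list of user/date pairs), the function name, and the reading of "weekly use" as "distinct users in the trailing seven days" are all assumptions on my part.

```python
from datetime import date, timedelta


def should_delete(usage: list[tuple[str, date]], started: date, today: date,
                  min_users: int = 3, review_after_days: int = 30) -> bool:
    """True once the review date has arrived and the past week had too few distinct users."""
    if today < started + timedelta(days=review_after_days):
        return False  # still inside the trial window
    week_ago = today - timedelta(days=7)
    recent_users = {user for user, used_on in usage if week_ago < used_on <= today}
    return len(recent_users) < min_users


# Hypothetical usage log for a prototype started on 1 January.
log = [
    ("ana", date(2025, 1, 28)),
    ("ben", date(2025, 1, 29)),
    ("ana", date(2025, 1, 30)),
]
print(should_delete(log, started=date(2025, 1, 1), today=date(2025, 2, 1)))
```

In this example only two distinct people used the tool in the final week, so the check says delete. Whether you wire this to real telemetry or just a calendar reminder matters less than deciding the threshold before the prototype exists.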

The teams that win this transition aren't the ones that build everything because the agent is fast. They're the ones who use the speed of agents to learn faster, while staying ruthless about what actually deserves a place on the roadmap as a real, owned, maintained system.

The actual question

Cheap to build is not the same as worth building.

Two years ago, "can we afford to build this?" was a real question that filtered out a lot of bad ideas for free. That filter is gone. The new question — the one you have to ask deliberately, because nothing else will ask it for you — is: even if this is cheap, should we own it?

For most things, the answer is still no. The vendors are good. They're getting better. The integration cost is dropping. Your team has better things to do.

For the small set of things where the answer is yes — the genuine differentiators, the short-lived experiments, the workflow glue that no one else will build for you — go fast. That's where the speed of agents actually matters.

The rest is a trap dressed up as productivity.
